December 15, 2025

The AI Adoption Gap: A Human Issue

The bottleneck in AI adoption isn't technical; it's human.

The knowledge gap

Most enterprises aren’t struggling with AI because they picked the wrong model, vendor, or architecture. They are struggling because adoption among employees doesn’t scale.

AI adoption is hard due to a knowledge gap:

End users don’t know what’s possible in their workflows

AI teams lack direct visibility into demand

Leadership sees activity, but limited measurable impact

The result? Low adoption, with consequences that include employees disengaging from AI, AI teams lacking the clarity to make decisions, and leadership spending significant resources without measurable results. Companies that close this gap will outperform those that don’t.

The importance of end-users

End-users are central to AI adoption, since AI only creates value when it works in personal workflows. The problem: end-users are domain experts in their own work, but often don’t know how AI can contribute effectively.

That’s why upskilling matters, but generic training doesn’t scale to personal workflows, because needs differ across roles, teams, and even individuals. Achieving AI adoption at scale therefore requires personalised enablement. The challenge is providing it in a human-like manner at scale, with a central point for governance.

The AI team as central hub

AI teams need clarity to act as the central hub for governance and enablement. Their mandate is clear: enable AI adoption at scale while maintaining oversight. In practice, AI teams often operate on incomplete signals, such as ad-hoc requests, priorities set without supporting data, or limited context on the actual work and needs of end-users.

Without consistent visibility into end-user needs, AI teams get stuck reacting to requests instead of acting as a central hub for high-impact use-cases and a coherent roadmap.

The operating model of leadership

Leadership is managing an opportunity cost. Moving too slowly on AI adoption leaves efficiency gains (and thus higher revenue and lower costs) on the table.

However, moving too quickly with an operating model that doesn’t scale wastes money, time, and effort. Most importantly, it creates internal scepticism towards AI that makes future adoption harder.

For leadership, the challenge is therefore choosing the right operating model for AI adoption. Selecting the right one results in sustained, measurable adoption among employees, and thus in spending with a clear ROI.

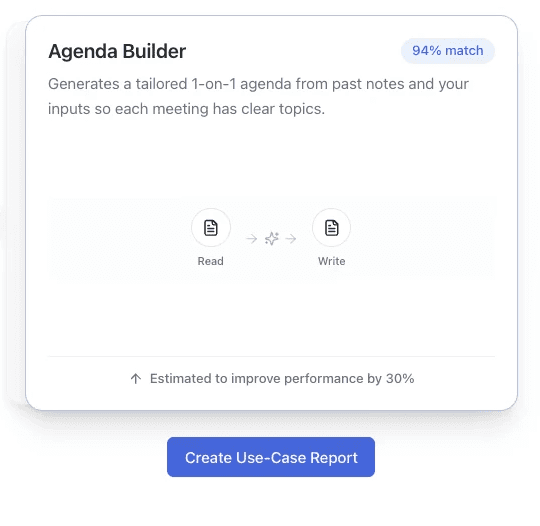

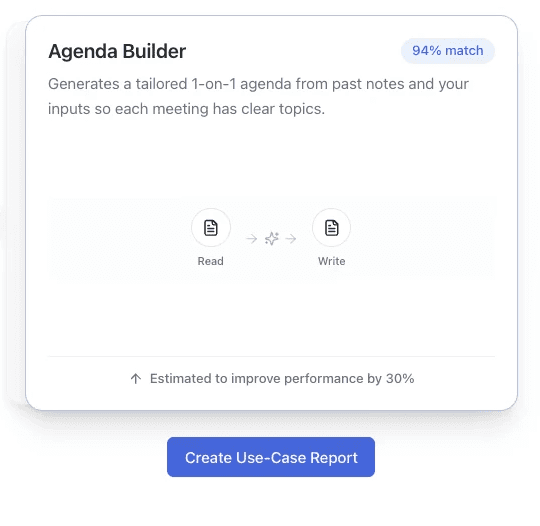

How Novo helps

Novo closes the gap between end-users, AI teams, and leadership by turning personal context into use-case recommendations. If you’re curious about how to build a scalable operating model for AI adoption and want to compare approaches, you can reach us at juliandeklerk@novosolutions.ai
